The Biological Floor

Why AI semis look cheap if you believe inference replaces labor

Despite Q1 FY26 revenue guidance of $78 billion, which implies more than 70% YoY growth, NVDA’s forward price-to-earnings multiple has contracted to ~21.4x.

This is well below its five-year average of ~39.1x. Macroeconomic headwinds are partly to blame, but I think the size of NVDA’s de-rating is interesting in its own right.
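To put a number on that contraction, here is a quick back-of-the-envelope sketch using only the two multiples quoted above. The re-rating figure assumes forward EPS estimates stay exactly where they are, which is a simplification and not a forecast.

```python
# Multiples quoted in the post.
current_pe = 21.4        # current forward P/E
five_year_avg_pe = 39.1  # five-year average forward P/E

# How far the multiple sits below its five-year average.
contraction = current_pe / five_year_avg_pe - 1

# Price move needed to return to the average multiple, ASSUMING
# forward EPS estimates do not change (illustrative only).
rerating_upside = five_year_avg_pe / current_pe - 1

print(f"Multiple vs. 5y average: {contraction:.1%}")
print(f"Re-rating upside at constant EPS: {rerating_upside:.1%}")
```

The asymmetry is worth noticing: a multiple that has fallen by a bit under half needs to rise by well over half to get back to where it was.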

Looking forward, I am still bullish on GPU demand, since I expect the need for compute to keep growing from here:

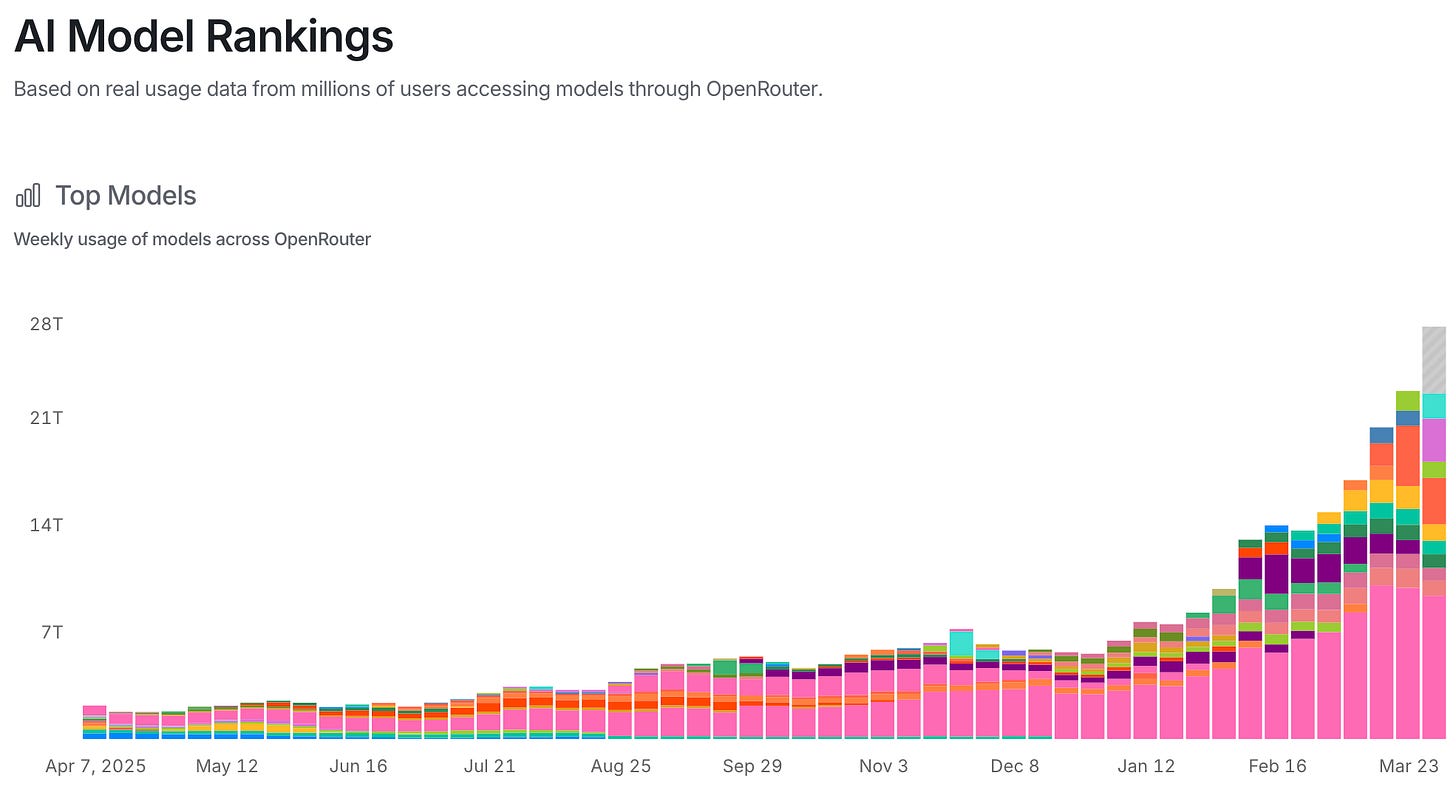

In roughly a year, weekly token usage has gone from ~2.18T to ~22.6T tokens, about a 10x increase. I only expect this to grow in the near term, especially as large companies are pressured to reduce headcount: as companies downsize, the work shifts to inference and inference demand grows.
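For a sense of what that growth means week to week, here is a minimal sketch that converts the two token figures above into a growth multiple and an implied compound weekly rate. Treating "roughly a year" as exactly 52 weeks is my assumption, and smooth compounding is a simplification of what the chart actually shows.

```python
import math

start_tokens_t = 2.18  # weekly tokens (trillions) a year ago, from the post
end_tokens_t = 22.6    # weekly tokens (trillions) today, from the post
weeks = 52             # ASSUMPTION: "roughly a year" treated as 52 weeks

# Total growth over the period.
growth_multiple = end_tokens_t / start_tokens_t

# Constant weekly growth rate that would produce that multiple.
weekly_rate = growth_multiple ** (1 / weeks) - 1

# Weeks needed to double at that constant rate.
doubling_weeks = math.log(2) / math.log(1 + weekly_rate)

print(f"Growth multiple: {growth_multiple:.1f}x")
print(f"Implied weekly growth: {weekly_rate:.1%}")
print(f"Implied doubling time: {doubling_weeks:.1f} weeks")
```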

This is something I want to devote a full post to in the future, but in looking at the future of white-collar work, I think a few things are becoming clear:

1. Junior/early-career roles are largely disappearing.
2. Mid-level employees will become vastly more efficient or will be part of the layoffs.
3. Economic rents will shift from humans to models/applications.

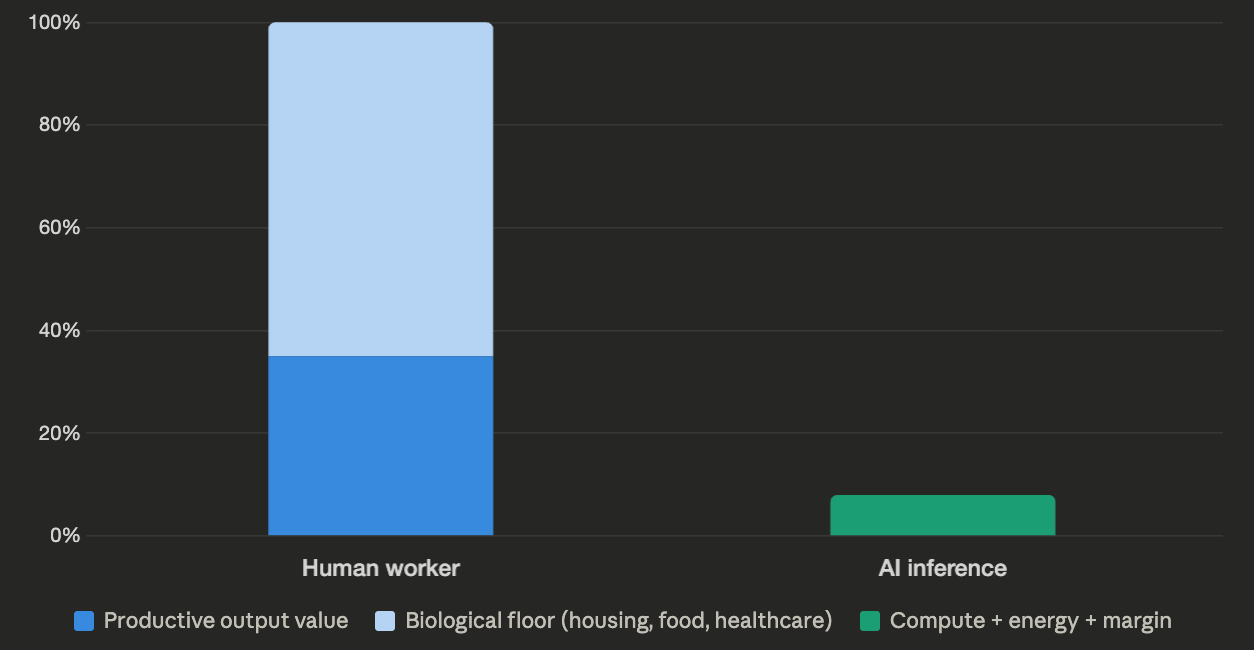

The third point is probably the most defensible. When you pay an employee a market rate, that rate has a biological floor — it has to cover housing, food, healthcare, and everything else a human needs to survive. That floor props up labor costs across the board, regardless of the actual productive output of the worker. Inference has no such floor. The cost of a unit of AI labor is compute, energy, and a margin to the model provider — and all three are deflationary over time. When you remove the biological floor from the cost of cognitive work, margins expand structurally, and every company will be pressured by shareholders and basic capitalism to exploit that gap.
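The mechanics of that argument can be sketched with a toy model: hold the human cost flat to represent the floor, let the inference cost deflate at a constant rate, and count the years until the lines cross. Every number below is hypothetical and chosen only to illustrate the shape of the argument; the function name and parameters are mine, not an established model.

```python
def years_until_cheaper(human_cost, inference_cost, annual_decline):
    """Count the years until a deflating inference cost drops below a flat human cost.

    human_cost     -- yearly cost of the human, held flat to represent the floor
    inference_cost -- yearly cost of doing the same work with inference today
    annual_decline -- fraction by which the inference cost falls each year
    """
    if human_cost <= 0 or inference_cost <= 0:
        raise ValueError("costs must be positive")
    if not 0 < annual_decline < 1:
        raise ValueError("annual_decline must be between 0 and 1")

    years = 0
    # Keep deflating the inference cost until it is below the human cost.
    while inference_cost >= human_cost:
        inference_cost *= 1 - annual_decline
        years += 1
    return years


# HYPOTHETICAL inputs: a $60k floor, inference that costs $150k today
# for the same work, and a 40% annual decline in inference cost.
print(years_until_cheaper(human_cost=60_000, inference_cost=150_000, annual_decline=0.40))
```

The point of the toy model is that the crossover date depends mostly on the rate of decline, not on the starting gap: as long as one side has a floor and the other deflates, the lines eventually cross.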

What’s important to mention is that this assumes a certain level of bullishness on AI progress. If you’re in the camp that AGI is coming, whatever that means for you, then you likely agree with this assessment. If you have a more conservative view on AI, it’s possible that this calculus won’t play out for a long time.

With that caveat aside, the flow of money in this scenario is clear: firms that need inference pay the model companies, namely OpenAI and Anthropic, and those companies need compute, likely from Nvidia. Today, Nvidia trades at roughly 21x forward earnings, a multiple that assumes datacenter revenue growth decelerates meaningfully from here. But if inference demand follows the trajectory of token consumption, which has grown ~10x in a year, then current forward estimates are likely too conservative.
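If forward estimates really are too conservative, the quoted multiple overstates what you are actually paying. Here is a small sketch of that arithmetic; the beat percentages are hypothetical scenarios, not forecasts, and the helper name is mine.

```python
def effective_forward_pe(quoted_pe, eps_beat):
    """Multiple on realized earnings if EPS lands `eps_beat` above consensus.

    eps_beat is a fraction, e.g. 0.25 means earnings come in 25% above estimates.
    """
    return quoted_pe / (1 + eps_beat)


quoted_pe = 21.0  # roughly the forward multiple quoted in the post

# HYPOTHETICAL scenarios for how far actual EPS exceeds today's estimates.
for beat in (0.10, 0.25, 0.50):
    print(f"EPS {beat:.0%} above consensus -> {effective_forward_pe(quoted_pe, beat):.1f}x")
```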

GPUs are generally depreciated over about 3-5 years, and Nvidia refreshes its product line on a regular cadence, so the installed base has to be replaced as well as expanded. If compute continues to ramp up and we see mass adoption of inference as a replacement for labor, I fail to see how datacenter spend slows in the near term.

Other (seemingly) Cheap Stocks in this Scenario

Micron, SK Hynix and Samsung all look like glaring buys if datacenter spend continues at this pace over the next 3+ years.

- Micron ($MU) trades at 4x forward P/E
- SK Hynix trades at 4x forward P/E
- Samsung trades at 6.8x forward P/E
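One way to read those multiples is to flip them into forward earnings yields (earnings divided by price). The sketch below uses only the multiples quoted in this post, with Nvidia's ~21.4x included for comparison, and assumes the forward estimates are actually met, which is precisely what the market is doubting.

```python
# Forward P/E multiples as quoted in the post.
forward_pe = {
    "Micron": 4.0,
    "SK Hynix": 4.0,
    "Samsung": 6.8,
    "Nvidia": 21.4,
}

# Earnings yield is the inverse of the P/E multiple.
# ASSUMPTION: forward estimates are hit, which is not guaranteed.
earnings_yield = {name: 1 / pe for name, pe in forward_pe.items()}

for name, ey in earnings_yield.items():
    print(f"{name}: {forward_pe[name]}x forward P/E -> {ey:.1%} forward earnings yield")
```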

“Markets are efficient bro..”

This is probably the thing that scares me the most about being long a few of these names. The market is generally pretty efficient, and if it’s not moving on these forward estimates, it’s worth asking why rather than assuming everyone else is wrong.

For the memory names specifically, the market has real reasons to be cautious. Export controls on China remain a moving target, and any tightening hits Samsung and SK Hynix directly. HBM sits inside a cyclical memory business with a history of brutal oversupply corrections, and the market has been burned before. There’s also a legitimate question about whether hyperscaler capex is sustainable at this pace, or whether we’re approaching a period where the Mag-7 pull back on spending as they digest existing capacity.

For Nvidia, the discount likely reflects a combination of those same capex sustainability fears plus the growing threat of custom silicon — Google’s TPUs, Amazon’s Trainium, and Microsoft’s Maia all represent real long-term share risk, even if Nvidia’s competitive position today is dominant.

I don’t think any of these risks are trivial. But I do think the market is pricing them as near-certainties rather than possibilities. If even half of the inference demand thesis plays out, these names are mispriced. All of this is to say that if you are a believer in AI, there are ways to get exposure to that upside, but you should keep watching what the market is telling you.

Disclaimer: This is not financial advice. Please do your own due diligence.